Reigning Pwn2Own champion: “The main thing is not to install Flash!”

03/03/2010
With the Pwn2Own hacking contest coming up at Vancouver’s CanSecWest security conference later this month, Italian computer security blog OneITSecurity took some time to interview Charlie Miller. Miller, in case…

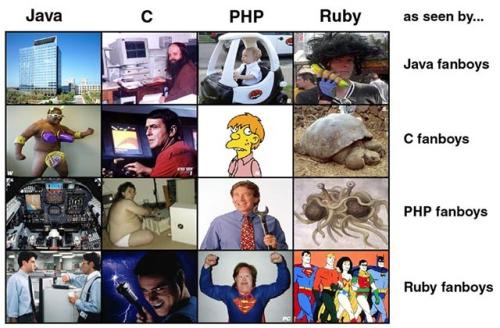

How Programming Language Fanboys See Each Other

27/12/2009
Holiday Fun: How Programming Language Fanboys See Each Others’ Languages.

Windows x64

03/02/2009
I’ve reinstalled Windows on my computer this week (I reverted from Windows 7 to Vista x64), so I had to install all the software I use again. I’ve never used…

Tips for maintainable Java code

08/06/2008
There are two ways of constructing a software design. One way is to make it so simple that there are obviously no deficiencies. And the other way is to make…

Is Java a pure Object Oriented Language?

05/10/2007
Someone asked me whether Java was a pure OOL (Object Oriented Language). Without thinking, my answer was yes, “thanks to the incompetent university I went to for 4…